I. Results

A. Results Regarding Radiation

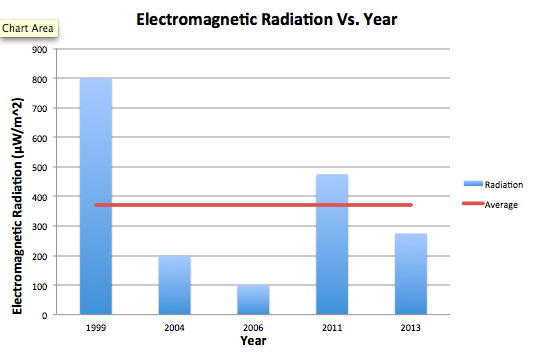

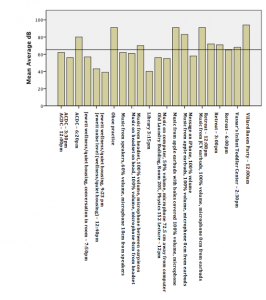

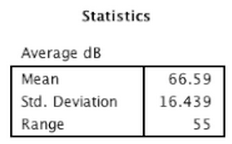

Overall, all of the microwaves we measured emitted fairly similar amounts of radiation, ranging from 30 µW/m^2 (the weakest emission, at the farthest point from the microwave) to 1,500 µW/m^2 (the strongest emission, at the closest point). While the general trend was that radiation decreased as we moved farther from the microwaves, not all cases followed this trend exactly. To better understand the factors that may have affected radiation, we compared radiation with 1) the power levels of the microwaves and 2) the age of the microwaves. The following graphs show these comparisons, with an added average line to show which microwaves fall below and above the average. The graphs plot the “Average” values measured from the front at 30 cm. We chose this orientation and distance because we felt it best reflects where a person would stand while microwaving food. Furthermore, since some microwaves emit a relatively consistent amount of radiation regardless of distance and others taper off, we felt this distance represented a good middle ground.

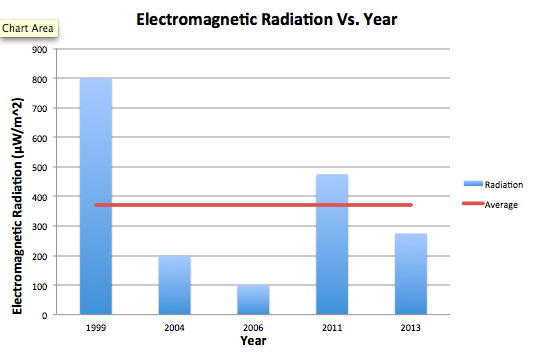

i. Microwave Age

It is certainly notable that the oldest microwave (M4) emitted by far the most radiation. After that case, however, emissions do not steadily decrease as the microwaves get newer: a microwave from 2011 also emitted significantly more radiation than the average. It is also notable that when we took measurements of the 1999 microwave, the only industrial microwave in our sample, the radiation emitted appeared to cycle: it would drop to zero, rise steadily, then fall back to zero. This cycle made it difficult to choose an average from the meter, and may also account for the higher average radiation we recorded. While microwaves may have become more advanced over the years and therefore emit less radiation, we would need a larger sample and constant power levels to test this hypothesis further.

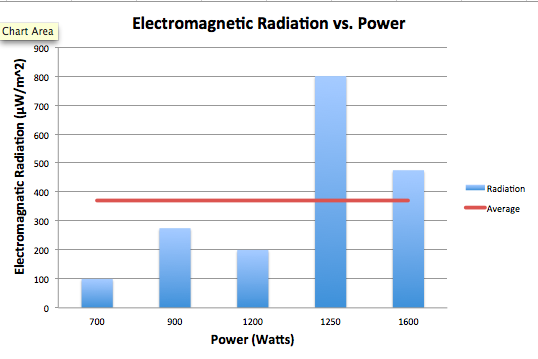

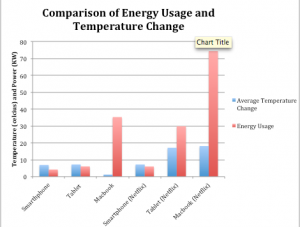

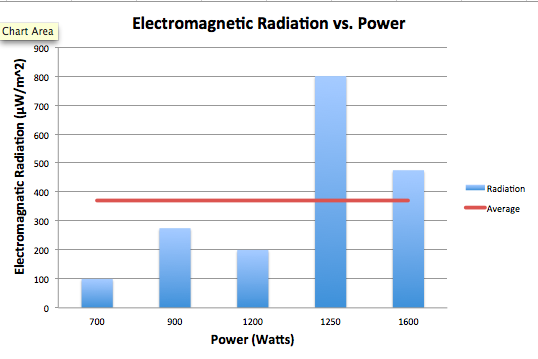

ii. Microwave Power

The association between power, in watts, and electromagnetic radiation seems to be a positive one. The microwaves that emitted above-average radiation were also the highest in power, while those that emitted below-average radiation were lower in power. The exceedingly high spike in EM radiation for the 1250-watt microwave may be influenced by other factors, considered in section i., but is notable nonetheless. Again, we would need a larger sample and more controlled conditions to test for a correlation between these two variables, but there does seem to be a positive relationship.

This microwave, M4, was the oldest microwave in our sample and also the only industrial microwave in our sample, as is evident from its unconventional appearance. Because it emitted by far the highest amount of radiation, it provided an interesting case to investigate further.

B. Microwave Radiation and Safety Standards

The EM radiation values we measured were all far below the safety standards set for consumer microwaves (see Section II). Across the variables of age, power output, wear and tear, and added interference, no microwave at any point exceeded even half of the safety limit set by the U.S. Food and Drug Administration.

C. RF Meter Findings

During data collection, we also went through trials and tribulations with the RF meter, the instrument we used to measure EM radiation. Since the manual was relatively vague, we relied on a lot of trial and error to get the most accurate measurements possible. A few of our findings may be helpful to others using this instrument in the future.

First, we found it necessary to take a preliminary measurement of EM radiation in the general vicinity of the appliance being measured. Background radiation from other sources may account for unforeseen variance and unexpected values in a data set, so it is good to know whether it exists. Furthermore, it is important to keep nearby cell phones and laptops off, as they can also interfere with these measurements.

Second, we found it more accurate, in our case, to measure along one axis rather than all three. This limits interference from other directions and therefore leads to a more accurate measurement of the appliance itself.

Finally, we found it important to use different settings of the RF meter to measure different values. While we began by measuring only on the “Maximum Average” setting, we soon realized that it was unreliable for getting a sense of the general radiation emitted. The highest calculated “Maximum Average” value remains on the RF meter until a higher value is detected. Therefore, if something interferes for even a second, its value will be displayed and remain displayed on the RF meter. We decided that although this value is telling, we also needed a more reliable, general idea of emission. For this we used the “Average” setting, which averages the values every few seconds and displays that reading. Since the reading constantly changes, we watched the meter in pairs and agreed on a number that seemed to be a middle ground of the readings we observed. These settings are important to test and understand so that the operator is truly measuring what they mean to measure.
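The difference between the two settings can be illustrated with a small simulation. This is only a sketch of the behavior we observed, not the meter's actual algorithm: the window length, the function names, and the sample values (in µW/m^2) are all hypothetical and are not measurements from our data.

```python
from statistics import mean


def rolling_average(readings, window=3):
    """Model of the "Average" setting: mean of the most recent readings."""
    displayed = []
    for i in range(len(readings)):
        recent = readings[max(0, i - window + 1): i + 1]
        displayed.append(mean(recent))
    return displayed


def max_average_hold(readings, window=3):
    """Model of "Maximum Average": the largest rolling average seen so far.

    The displayed value never goes down, so one brief spike stays on screen.
    """
    held = 0.0
    displayed = []
    for value in rolling_average(readings, window):
        held = max(held, value)
        displayed.append(held)
    return displayed


# Hypothetical readings with a one-sample interference spike (900).
samples = [100, 110, 90, 900, 100, 105, 95, 100]

avg = rolling_average(samples)
held = max_average_hold(samples)

print(f"Final Average reading:         {avg[-1]:.1f}")   # 100.0
print(f"Final Maximum Average reading: {held[-1]:.1f}")  # 368.3
```

In this sketch the rolling average returns to about 100 once the spike has passed, while the held maximum stays at the inflated value. That matches why we treated “Maximum Average” as a worst-case figure rather than a typical one.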

II. Results Analysis and Conclusions

Because microwaves generate high levels of heat, direct exposure of human tissue and organs to them is potentially lethal. Despite this, common consumer microwave ovens pose few health risks, thanks to strict safety standards and effective interlock technology.

First, the International Electrotechnical Commission has set an emission limit of 50 watts per square meter at any point more than five centimeters from the oven surface. The U.S. Food and Drug Administration has set a limit of 5 milliwatts per square centimeter at any point more than two inches from the surface, which is numerically the same value (5 mW/cm^2 = 50 W/m^2). Most consumer microwaves are reported to meet these standards easily. Further, the drop-off in microwave radiation with distance is significant, with the FDA reporting that “a measurement made 20 inches from an oven would be approximately one one-hundredth of the value measured at 2 inches.” As the majority of our readings, despite substantial electromagnetic interference at times, were in the range of microwatts per square meter, these standards appear to be successful.
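To compare the two limits and our readings on a common scale, the unit conversions can be sketched as follows. The function names are our own. The inverse-square relationship is an idealized assumption, chosen because it reproduces the FDA's quoted 2-inch versus 20-inch figure. The 1,500 µW/m^2 value is the strongest “Average” reading reported above, which was not necessarily taken at the 5 cm reference distance, so the comparison shows rough scale only.

```python
import math

UW_PER_W = 1_000_000   # microwatts in one watt
MW_PER_W = 1_000       # milliwatts in one watt
CM2_PER_M2 = 10_000    # square centimeters in one square meter


def mw_per_cm2_to_w_per_m2(value):
    """Convert a power density from mW/cm^2 to W/m^2."""
    return value * CM2_PER_M2 / MW_PER_W


def fraction_of_limit(reading_uw_per_m2, limit_w_per_m2):
    """Express a reading in µW/m^2 as a fraction of a limit given in W/m^2."""
    return (reading_uw_per_m2 / UW_PER_W) / limit_w_per_m2


def inverse_square_ratio(near, far):
    """Idealized far/near intensity ratio (distances in the same unit)."""
    return (near / far) ** 2


fda_limit = mw_per_cm2_to_w_per_m2(5)   # 5 mW/cm^2 expressed in W/m^2
iec_limit = 50.0                        # already in W/m^2

print(f"FDA limit: {fda_limit} W/m^2, IEC limit: {iec_limit} W/m^2")
print(f"Strongest reading as a fraction of the limit: "
      f"{fraction_of_limit(1500, fda_limit):.6f}")
print(f"Intensity at 20 in relative to 2 in: "
      f"{inverse_square_ratio(2, 20):.2f}")

assert math.isclose(fda_limit, iec_limit)
```

Under these assumptions, the strongest reading comes to roughly three hundred-thousandths of the limit, and the idealized drop-off from 2 inches to 20 inches is about one one-hundredth.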

Second, microwave ovens use a two-step interlock system that ensures the magnetron cannot function while the door is open. This means little radiation leaks, and opening the door while the oven is running immediately shuts off all microwave emission. Our readings did suggest that more radiation leaked from the right side of the oven (near the magnetron) than from the door, but these levels remained well below international standards.

Our results are not overly surprising, as we entered the experiment aware of the strict safety standards in place for microwave oven radiation levels. Thus, our data support our hypothesis: standard consumer microwave ovens do not emit microwave radiation at anywhere near the levels needed to be dangerous to the user. While not all of our data look as orderly when graphed as we expected, we believe this to be a result of our imperfect data-taking conditions and irregular interference levels. If we were to continue recording data in more controlled conditions, we believe the results would continue to fall within safe standards.

III. What Science Did We Learn?

Microwaves are high-frequency radio waves used for many purposes, from television broadcasting to kitchen cooking. Microwave ovens are common household items that generate microwaves (around 2450 MHz) using a metal tube called a magnetron. These microwaves are directed into the oven cavity, a space enclosed by metal walls, ceiling, and floor, with the exception of the oven door. Metal reflects microwaves almost completely, so the waves bounce around inside the oven, while glass and some plastics are nearly transparent to microwaves, allowing the waves to pass through containers and be readily absorbed by food (especially food that is largely water based). Microwaves force polar molecules in the food, such as water, to flip their orientation back and forth at rates high enough to generate heat and, thus, cook the food or boil the water.
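For a sense of scale, the 2450 MHz frequency can be converted to a wavelength with the standard relation wavelength = speed of light / frequency. The short sketch below assumes free-space propagation, and the function name is our own.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458  # exact by definition of the meter


def wavelength_cm(frequency_mhz):
    """Free-space wavelength in centimeters for a frequency given in MHz."""
    frequency_hz = frequency_mhz * 1_000_000
    return SPEED_OF_LIGHT_M_PER_S / frequency_hz * 100


print(f"{wavelength_cm(2450):.1f} cm")  # prints "12.2 cm"
```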

While microwave radiation can be dangerous, microwave ovens adhere to safety standards that prevent harm with proper use and maintenance (if an oven door is damaged, more radiation may leak out). While microwaving has been shown to break down key nutrients in some foods, the process does not make the food radioactive or dangerous to eat, only less healthy.

IV. For the Future…

If we had to do this project again, we would start by testing the RF meter and its functions before gathering data with it, to gain a better understanding of its diverse (and sometimes unintuitive) settings (Max Avg vs. Avg, etc.). We collected some surprising data from the industrial microwave (M4); the radiation levels fluctuated from almost nothing to nearly 2.5 mW/m^2 (Max Avg), which is a huge range! Perhaps this was partially caused by the base-level radiation (this was the only microwave whose surroundings had a measurable electromagnetic field while it was off), perhaps we didn’t have a complete handle on the RF meter, or perhaps the microwave functioned differently from the others, sending out pulses instead of a steady stream of microwaves and so creating the oscillation in our readings. Should we do the project again, we would make sure we knew precisely how the RF meter takes each of its readings, and we would research the different mechanics of microwaves further to better explain surprising data such as this.

V. Next Steps

If we were to extend this project, we would test a wider variety of microwave models. It would be interesting to track microwave emission over the years, gathering data to test a hypothesis about the technological improvements being made in microwave development. It would also be interesting to compare industrial microwaves with commercial microwaves, seeing as M4 differed so greatly from the other microwaves. We would also widen our sample to test more reliably some of the relationships we have preliminarily identified. While the relationship between microwave power output and EM radiation seemed promising, we would need to test this hypothesis in a bigger sample, with more controlled conditions, before trying to find a mathematical formula to describe it.

Emma Foley; Hunter Furnish; Hannah Tobias

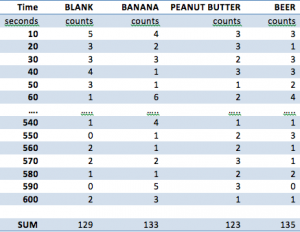

![Figure 6: Data collected [updated]](http://pages.vassar.edu/ltt/files/2014/02/Updated-Data-1024x452.png)